I remember the day I first attempted to get my Aadhaar identification card made ten years ago. Every resident in my housing complex had been informed by the neighborhood association to show up, and we obliged. The summer never felt oppressive then, even in the thick of a crowd at a government-school maidan in the borderlands of Delhi. I could stand outside, sweating relentlessly without feeling the slightest discomfort. At some point the queue moved ahead, and I was shuffled into a small, dark classroom with desks joined together and a blackboard painted on the wall.

We will take your photo and fingerprints, but something is wrong with the retina system, so you will have to come again to fill in the details.

Like a marionette, I did what was asked. Pressed my thumb sharply on a small machine illuminated by a green light, stared into the beady eye of a camera propped up on a monitor. I was given a slip and requested to return next week. I never got around to it.

The second time I attempted, successfully, to get my Aadhaar card was several years later. This time, the process led me to the swanky, carpeted, air-conditioned offices of a private firm in Delhi’s prestigious neighborhood of Barakhamba Road. The government had outsourced the Aadhaar data collection to private firms, with guidelines on how to enroll persons in the database. Before I could blink, I was told the process was complete. My Aadhaar would arrive in a password-protected PDF in my email inbox. I was free to go.

Between the year I stood indifferently in a dilapidated school on the edge of the city and this, Aadhaar remained de jure a voluntary document to be procured at individual discretion. But de facto, it became a necessity to access many bureaucratic and banking services in India. After weeks of being roadblocked by district offices and private banks, I relented and found the center that was known to issue them the fastest.

Introduced by the government of India in 2009, the twelve-digit identity number known as Aadhaar is assigned to all residents of India. Our personal data is compiled in the Central Identities Data Repository, creating the largest database of biometric information in the world. (Aadhaar is also referred to as UIDAI, or the governing body Unique Identification Authority of India.) Software industrialist Nandan Nilekani was appointed UIDAI’s inaugural chairman, and in 2010 over 220 private firms were enlisted for biometric-data collection. The status of Aadhaar as an identity document has been uncertain and contested—while it is not a sufficient proof of citizenship, it is used as a proof of residence. Public enquiries have revealed the existence of significant fake or fraudulent Aadhaar ID numbers, reports of database breaches are frequent, and over forty-nine thousand certified agents have been blacklisted for fraud. Simultaneous to such concerns, enrolling in Aadhaar is mandatory for all persons residing in India who do not hold other citizenship, as is the submission of Aadhaar in banking, telecom, and other customer-verification processes. In 2021, Aadhaar was one of six accepted forms of identification required to register with the government’s COVID-19 vaccination portal, developed to manage the country-wide administration of doses by public and private agencies. Still, many citizens who chose to register using other documents on the portal were informed that Aadhaar identification was mandatory to receive the dose.

At the time of this writing, the government has amended a set of rules from 1960 (known as the Registration of Electors Rules) to mandate that database members provide proof of their Aadhaar details in order to register to vote. The Internet Freedom Foundation found last year that Booth Level Officers, who manage polling on the ground as part of the Election Commission (EC), have begun paying visits to citizens demanding that they link their Aadhaar numbers and their voter IDs; officers also reportedly said that failure to comply would result in removal from electoral rolls. Such a requirement contravenes one of the founding principles of the Indian constitution: universal adult suffrage. After being asked for clarification, the EC maintained that providing one’s Aadhaar card remains voluntary. Yet, doing so effectively remains mandatory, and on the online portal for voter registration, there is no option to opt out of filling in Aadhaar details. Touting “verification” as the reason for linking Aadhaar to voter databases, the EC overlooks the troubling history of the deletion of hundreds of thousands of names from voter databases, which stemmed from the failure of both the administrative process and the biometric technology of Aadhaar in enrolling many. This erasure in the voter list led to voter suppression in the state elections of 2018.

Unlike Duchamp’s claim of “License to Live”—the title of his entry in a 1947 issue of the Surrealist publication Acéphal—you can exist without an Aadhaar, but you cannot function for too long without it. From registration of birth to certification of death, Aadhaar is mandated at every step.

*

When the password-protected PDF bearing my Aadhaar arrived in my email inbox, I saw that it had the picture of me taken years ago in that dingy classroom. My head was tilted, as it always is. My face is grainy, and shadows from the poor lighting that day obscure my features. There is no background in the picture; my head has been poorly cut out from that day, sliced from the classroom with a jagged line that traces the shape of my hair and neck. I had a geometric, short-lived hairstyle then, which others around me chose to describe as unfortunate. Looking back, I was a bit of an odd melon that summer, yet to fall into adulthood and hanging onto the world of innocence. Today, the roughness of this picture sits uncomfortably in a world of high-definition cameras and portrait modes on phones, and in the domain of ultra-codification that is the passport photo, with many countries specifying errors in composition (size, background color, visage, or torso) as a basis to reject applications. The history of identity documents is replete with sharply tailored images taken on street cameras—the pristine outputs of studio photography. Within this history, the jumpy, unfocused picture on my Aadhaar is an embarrassment.

My Aadhaar photo is also the hallmark of “modern documentarism,” which Hito Steyerl describes as an order different from earlier and, possibly, subsequent modes of representation. The lack of focus and “uncertainty” of nascent digital images and web feeds embodied an expressionistic quality, which rendered them authentic in a way that differed from conventional understandings of the documentary, which had aspired to cohesion and consistency and, crucially, an attempt to form an indexical relationship with its environment. These images of new documentarism, on the other hand, may bear loose similarity or, as Steyerl argues, “no similarity to reality; we have no basis for judging whether reality is being shown here in any objective way.” Yet the grainy jaggedness of this output—quite distinct from the sfumato-like beauty of today’s grungy filters—was real enough. Despite holding “little else than their own excitement,” these trembling images are considered authentic precisely because of their high celerity and low clarity.

This charged mode of representation can get under your skin in a way quite different to the affective mode of the analogue, which dealt with the aura of imprints, residues, and photochemical mutations—transformations that brought forth in the celluloid a rare connection between its particularity and an archetypal form of photographic sight. In the absence of a stable iconicity or the ur-image, documentarist images are temporally bound to a hyperspecific radius of generation, but their affective current connects to a larger stream of images that move at dizzying velocity, jumping through hoops of lossy compression. The camera here is in the thick of whatever it is capturing—it is on the run, it is submerged, it is bathed in the same darkness as the classroom with only one source of light. And it arouses in the viewer a proximal thrill, a surge of spectatorial witnessing; an affective charge that is renewed time and time again by the ceaseless proliferation of such images.

The uncertainty that Steyerl ascribes as characteristic to this new order of images corresponds to the “precarious nature of contemporary lives as well as the uneasiness of any representation.” Yet precarity and visibility are not socially or politically distributed in even measures. Steyerl’s discussion of the uncertainty of representation that marks modern documentarism begins with the analysis of a news feed relayed by a reporter from atop a military tank during the US invasion of Iraq in 2003. Contrarily, shaky, unsteady “feeds” are now the primary form through which we consume acts of live reporting, sousveillance, and documentation of mass protests or acts of civic resistance to dominance or discrimination. Simone Browne describes these acts of recording power or subverting the surveillant gaze as “dark sousveillance,” functioning to invert the “permanent illumination” and hypervisibility of race that undergird surveillance structures.

Browne understands surveillance as a triage that produces, targets, and reinforces race through visuality, biometrics, and identity documents, while dark sousveillance is a group of “freedom practices” devised by surveilled and racialized bodies to evade or operate fugitively within the matrix of racialization. Kelly Ross, discussing Browne’s book Dark Matters: On the Surveillance of Blackness, observes that—while surveillance is the method of racializing bodies by making them visible, legible, and trackable—dark sousveillance implicates the body in other ways, through either fugitivity, such as wearable and personal cameras, or a discordant visibility. Modern documentarism of dark sousveillance couldn’t be further from the grainy news feed of CNN or the weak capacity of the camera used in that dark Delhi classroom, both of which determine and fix, however hazily, the bodies that meet them. Dark sousveillance, on the other hand, is firmly rooted in precarious, vulnerable, and marked bodies and the environments of their movement and debilitation, with odd and choppy angles, interruptions, and the instability of a combative encounter woven in.

In India, the surge in the popularity of new documentarist technologies such as the webcam or cellular phones with cameras coincided with the advent of Aadhaar’s documentary regime. When the system launched, a network of private enrollment centers for Aadhaar sprang up through the country. The technological requisites for facilitating enrollment were a biometric device, an iris scanner, a webcam, and computers for data entry. The project was dependent on achieving scale for the database to hold any value, and it had to achieve it with rapidity. In its path toward scale and speed, Aadhaar merged technologies with vastly different genealogies in the subcontinent: the fingerprint, the earliest use of which for bureaucratic/state reasons was in nineteenth-century colonial India, and the webcam and iris scanner, which in the aughts were considerably limited in their availability.

Aadhaar is like most things digital—in Brian Massumi’s words, “sandwiched between an analog disappearance into code … and an analog appearance out of code.” The first step to getting an Aadhaar card is to fill out a form. After the final step, the card exists for the most part as a printed document, often submitted by people as hard copies to concerned agencies. In between these states of creation and emergence, there are sufficient loopholes that allow for the proliferation of fake cards, as news reports discover every few weeks. Easily replicable in design, verifiable by no singular authority, and bearing a string of numbers, a webcam portrait, and a barcode, the card is pliable to forgery and manipulation in the “twisted, contortionist” ways of the analogue. Beyond concerns of fraudulent cards and the vulnerability of the database, projects such as Aadhaar propose a distinction between “identity and identification”—the former an amalgamation of social relations and historical processes, and the latter touted as a neutral act of correlating one piece of information to another. As Ursula Rao notes, the idea of a bias-free system falters when one recognizes that any “new technical routine always relies on the fleshly-embodied subjectivities of people who witness, judge or adjudicate identity.” Rao’s analysis of Aadhaar’s accessibility for enrollment in Indian cities suggests the concept of “political ecologies” as described by Mahmoud Keshavarz as “the dynamic relations that are produced with various forms of articulated things.” Drawing from the conceptual foundations of biopower, Keshavarz’s work explores the infrastructural formations that produce networked relations of control as the amalgamation of “scientific knowledge, technical apparatuses, anthropological assumptions, and architectural forms in strategic ways.”

Diverging from existing frameworks of establishing identity such as Voter ID cards, passports, and driver’s licenses, which require multiple tiers of verification by agents, an Aadhaar requires the “person” of the citizen only in the first step: the habeas corpus. Once a person has submitted a preexisting identity document or an official letter vouching for the same, and biometric information has been collected, the software used by Aadhaar conducts de-duplication and triangulation against other entries in the database to verify their identity. This process is described in a manner that emanates a sense of infallibility.

Rao conducted fieldwork at Aadhaar centers in New Delhi, and her findings show that enrollment is contingent on a matrix of “social techniques for recognition that make people visible to the state by placing them in accepted and culturally-meaningful contexts.” The set of documents required to enroll—proof of birth and proof of residence—can be especially challenging for migrants to the city to produce, as these are often not required in peri-urban or rural contexts. And when the documents are in place, the body can falter. As grueling manual labor calluses and roughens the hands of millions in the subcontinent, the fingerprint machine fails to read their effaced prints. This failure to read fingerprints—the technical term for which is “failure to capture”—of those engaged in physically laborious tasks has led to the exclusion of many from access to critical welfare schemes such as food rations. That bodies today exist in the pale of their documentary forms demonstrates a processual inversion by which it is a document’s “authenticity that is checked and compared to our body and not vice versa.” To be deficient in machine readability leads to the institutional denial of bodily existence.

Failure to capture can lead to failure to enroll, and individuals stranded at the borders of the database find themselves situated there due to their variance from institutionally enforced ideas of bodily normativity, whether at birth, through chance, social processes, or, as Sanneke Kloppenburg and Irma van der Ploeg suggest, through the enaction of the biometric process itself. The latter is an important thread to hold in this discussion: by building a baseline that takes a certain dataset as normative, biometric systems translate bodies into essentialized differences. The failure of the machine to read a body inscribes difference as intrinsic to the body. Such approaches, according to Kloppenburg and van der Ploeg, “implicitly assume that the performance problem is with the users and not with the biometric technologies.” Rachel Douglas-Jones, Antonia Walford, and Nick Seaver argue that data is not given, which Vijayanka Nair extends to the realization “that one is not born, but rather must become, data.” Becoming data, or a “stable object” removed from the rigidity of social subjectivities and the flux of temporal progression, is a process that further inscribes and fixes identity into categories, and denies the possibility of mobility, fluidity, or transgression.

I first encountered poet Essex Hemphill’s 1995 lecture “On the Shores of Cyberspace” in Legacy Russell’s Glitch Feminism. Writing beyond the virtual awning of the internet that promised liberation, Hemphill asked:

Hemphill’s lament, which is directed at the vaster terrain of cyberspace, is particularly resonant with the infrastructure of biometrics. In Sorting Things Out: Classification and Its Consequences, Geoffrey C. Bowker and Susan Leigh Star suggest the term torque to describe the dissonance that occurs when “the ‘time’ of the body and of [its] multiple identities cannot be aligned with the ‘time’ of the classification system.” Torsion is an effect of classificatory systems that work within either a binary mode (which Bowker and Star frame as “Aristotelian”) or prototypical mode (a gradation of variance from the norm). The authors bring up a near-universal feature of datasets—the option of choosing “none of the above,” which is closer to the prototypical function than a yes-or-no format.

When not relegated to the shores, bodies that resist normativity can be shuffled into an empty category, the “residual” pile, a flatland where all that is solid melts into air. Nair studies how the aspirations of Aadhaar, which are to build a database of individuals as separate from community affiliations, falter even before the system can begin. Embedded in Aadhaar’s “conditional” yet regularized demand for demographic data, including proof of relation, is the assertion of kinship and social location. In becoming data, the person is laden with the weight and implications of their social existence, shrouded in its textures of dominance and exclusion.

Ranjit Singh and Steven J. Jackson describe the transfer of social location and barriers onto the Aadhaar database as the creation of “high resolution” and “low resolution” citizens. The latter is quite literally “hard to see” through the miscategorization, unavailability, or residual nature of their data. High-resolution citizens, on the other hand, experience ease in adopting and integrating technologies of surveillance and data collection into their lives, owing to economic and/or socioreligious advantages. In the framework of torsion, Bowker and Star describe this category as: “The advantaged are those whose place in a set of classification systems is a powerful one and for whom powerful sets of classifications of knowledge appear natural.”

It is at this juncture that the distinction between race and technology and race as technology—with its function as a “mapping tool” with an “ability to link somatic differences to innate physical and mental characteristics,” as discussed by Wendy Hui Kyong Chun—becomes pertinent. Citing Samira Kawash on how race as technology correlates “bodily sign of race” with “interior difference,” Chun writes that whether understood through biology or culture, race “organizes social relationships and turns the body into a signifier.” She cites Ann Laura Stoler’s description of race as the technology that creates, mediates, and enforces “sets of relationships between the somatic and the inner self, the phenotype and the genotype, pigment shade and psychological sensibility.”

Isabel Wilkerson describes the distinction and conjunction between race and caste in Caste: The Origins of Our Discontents—a book that lacks an exploration of caste as an urgent issue in contemporary India—as contingent on the linking of race with sight as the technology of epidermis, and caste with inheritance of rank, the technology of eugenics, with an end toward subjugation of difference. Wilkerson explains: “Race is what we can see, the physical traits that have been given arbitrary meaning and become shorthand for who a person is. Caste is the powerful infrastructure that holds each group in its place.” Singh and Jackson’s description of infrastructure, the term evoked by Wilkerson to describe caste, serves to better understand the continuity and persistence of caste in India as the often invisible ground that orders objects and places them in relation to one another. They write: “Infrastructures are both relational and ecological: different groups impute different meanings to their function and differentially experience their emergence through time … Infrastructures are therefore neither fixed nor given, but always in a state of becoming.”

The technology of caste, which manifests in ideologies of contact as pollution, infrastructures of segregation, and politics of domination, functions such that while the effects of caste power are clear, its naturalization is less apparent: “less invasive, less visible, more encompassing and ubiquitous,” as Keshavarz writes. While reading Hemphill’s lecture, I was struck by another cry from a different place and time—Rohith Vemula’s final letter. Vemula, a research scholar at the University of Hyderabad and a Dalit activist, was persecuted by the university administration for his participation in the student activist group Ambedkar Students’ Association. Vemula died by suicide in January 2016, a year after the university had discontinued his scholarship and mere months after he was suspended. Vemula’s death has been condemned as institutional murder, laying bare the stranglehold of caste in contemporary India. In his final letter, Vemula wrote: “The value of a man was reduced to his immediate identity and nearest possibility. To a vote. To a number. To a thing. Never was a man treated as a mind. As a glorious thing made up of star dust. In every field, in studies, in streets, in politics, and in dying and living … My birth is my fatal accident.”

In a chapter titled “The Fact of Blackness” in Black Skin, White Masks, Frantz Fanon describes the condition of blackness as a bodily dislocation that is produced in relation to whiteness: “I came into the world imbued with the will to find a meaning in things, my spirit filled with the desire to attain to the source of the world, and then I found that I was an object in the midst of other objects.” This dislocation is followed by a gesture of a somatic fixing into genealogical place—a place of otherness: “And already I am being dissected under white eyes, the only real eyes. I am fixed. Having adjusted their microtomes, they objectively cut away slices of my reality.”

This slicing away of reality conveys the corporeal, phenomenological, and tactical ways that systems of dominance can position and orient bodies. The idea of orientation, proposed by Sara Ahmed in the context of queer and feminist orientations, is the shaping of the world for bodies through repetitive contact with certain objects (material and immaterial). It is a state of flux where objects and experiences shuffle from the foreground to the “background” as bodies orient themselves. Ahmed writes that “being directed toward some objects and not others involves a more general orientation toward the world … If orientations affect what bodies do, then they also affect how spaces take shape around certain bodies. The world takes shape by presuming certain bodies as given.”

As Ahmed describes how heterosexual orientation produces “straight tendencies” as a way of moving in the world and moving the world along straightness, and how the “slanting” of queer bodies in environments designed and marked for heterosexuality is always to be corrected, I am reminded of B. R. Ambedkar’s autobiographical work, Waiting for a Visa (1990). In the book, which arranges episodes on experiences of caste discrimination, we are made aware of how the mobility and place of a body marked by racial difference and caste status are prohibited, constrained, and/or staggered spatially. Orientation understood as a “straightening device,” when extended to the infrastructure of caste, tells us how bodies are moved along trajectories of ease or discordance, with the orientation of the world pressing into the slanted body. In one vignette, Ambedkar recounts that upon returning to the city of Vadodara after studying abroad, he is hesitant to call on his erstwhile associates and friends, unsure if they will be comfortable offering him a place to stay. No Hindu inns will have him, and so he approaches a Parsi inn (also exclusive to the community), working out an arrangement with the innkeeper until he is driven out and evicted with no notice. Left with no place to stay in Vadodara, Ambedkar sits in a public garden, Kamati Baug, for five hours, waiting for the train to Bombay to arrive. As I follow Ambedkar’s distressed movement from one place to another—until he reaches a moment of stillness at the public park bench, after making the decision to leave Vadodara—Ahmed’s analysis of “social action” rings as critical to understanding the spatial ecology of caste. Citing Marx and Engels, she holds that the trajectories of bodies are not simply given but take shape through “the activity of a whole succession of generations,” which leads to her insight into the “‘sedimentation’ of that work as the condition of arrival of future generations.”

I bring up Ahmed’s concept of orientation, or how bodies are aligned with their environments, not only to highlight how the material and affective ecology of surveillance can accrue in the psyche and body—such as through everyday acts of profiling, obstruction, and curtailment of racialized bodies, and with it the incumbent feelings of anxiety, frustration, and anger—but also to consider the orientation of biometric entries as bodies within datasets. Take, for instance, the logic of likeness that biometric datasets rely on as the preferred methodology for testing the authenticity of an identity. Kloppenburg and van der Ploeg describe this process in two stages: First, at the instance of enrollment, a biometric template is generated that stores the collected sample in the form most amenable for pattern-recognition algorithms. Following this, the person undergoes biometric verification through a probe that compares the features presented at this moment with the template stored in the database. The comparison score generated during the probe determines the authenticity of the person’s identity. The logic of these algorithmic tools can be described as homophily—a concept introduced by Chun in the edited volume Pattern Discrimination. Homophily, or love for the same, describes the impulse that drives networked infrastructure, built on recognizing data through its degree of similarity or difference, and further using “differences as a way to shape similarities.” Borrowing from Phil Agre, Chun describes such networks as “capture systems” based on how algorithms produce a “grammar of action”—sets of action and inaction that can yield vastly distinct outcomes for those “read” differently.
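The two stages that Kloppenburg and van der Ploeg describe can be sketched in a few lines of Python. This is a toy illustration under loud assumptions, not a description of Aadhaar's software: the function names (`enroll`, `comparison_score`, `verify`), the use of cosine similarity as the score, and the threshold of 0.9 are all hypothetical choices made for the example. Real biometric matchers use far more elaborate feature extraction.

```python
import math


def enroll(sample):
    """Stage 1 (enrollment): turn a raw sample into a stored template.

    Here the "template" is simply the sample scaled to unit length.
    A sample with no signal cannot be enrolled; the error message borrows
    the essay's term "failure to capture" as a hypothetical label.
    """
    norm = math.sqrt(sum(v * v for v in sample))
    if norm == 0:
        raise ValueError("failure to capture: sample carries no signal")
    return [v / norm for v in sample]


def comparison_score(template, probe):
    """Stage 2 (probe): score the live sample against the stored template.

    The score is cosine similarity, an assumption chosen for simplicity:
    1.0 means identical direction, 0.0 means no resemblance.
    """
    norm = math.sqrt(sum(v * v for v in probe))
    if norm == 0:
        return 0.0
    return sum(t * (p / norm) for t, p in zip(template, probe))


def verify(template, probe, threshold=0.9):
    """Accept the identity claim only if the score clears the threshold."""
    return comparison_score(template, probe) >= threshold


if __name__ == "__main__":
    stored = enroll([3.0, 4.0])
    print(verify(stored, [3.0, 4.0]))   # same features presented again
    print(verify(stored, [4.0, -3.0]))  # features that no longer resemble the template
```

Even in this reduced form, the decision sits entirely in the threshold: a body whose presented features have drifted from the template, as with worn fingerprints, is rejected by the same arithmetic that accepts others.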

Chun’s concept of homophily is particularly apposite to understanding the entanglement of biometrics with caste in India. Through the recent scholarship of Simon Michael Taylor, Kalervo Gulson, and Duncan McDuie-Ra, we can see the arc that connects the birth of statistical sciences in India to similarity functions that guide profile-matching algorithms like facial recognition and other machine learning today: the Mahalanobis distance function, commonly described as a statistical similarity measure. The distance function is used to “locate outliers to a set of data,” where degree of similarity or divergence is used as a metric to ascertain the accuracy or truthfulness of entries and their membership to a group. Taylor, Gulson, and McDuie-Ra demonstrate the abiding principles of caste domination and racialized imagination that inform and continue to animate the Mahalanobis function, which is widely perceived as “neutral.” Introduced in 1936 by P. C. Mahalanobis, the distance function distinguished populations into castes, tribes, and other communities based on a 1920 census dataset of the province of Bengal collected by the Zoological and Anthropological Surveys of India. It was developed with the express intent to “uncover the essence of caste, to abstract those defining features on the basis of which castes across the regions could be identified, counted, compared, and classified.”
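For readers unfamiliar with the measure, the Mahalanobis distance of a point x from a group with mean μ and covariance Σ is the square root of (x − μ)ᵀ Σ⁻¹ (x − μ): distance from the group's center, rescaled by how the group itself varies. The sketch below is a minimal illustration in Python, restricted to two features so that the covariance matrix can be inverted by hand. The function names and the outlier threshold are assumptions made for the example and are not drawn from Mahalanobis's papers or from any deployed system.

```python
import math


def mean_and_covariance(points):
    """Return the mean and 2x2 sample covariance of a list of 2-D points."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    sxx = sum((p[0] - mx) ** 2 for p in points) / (n - 1)
    syy = sum((p[1] - my) ** 2 for p in points) / (n - 1)
    sxy = sum((p[0] - mx) * (p[1] - my) for p in points) / (n - 1)
    return (mx, my), ((sxx, sxy), (sxy, syy))


def mahalanobis(point, mean, cov):
    """Distance of a point from a group, scaled by the group's covariance."""
    (a, b), (c, d) = cov
    det = a * d - b * c
    if det == 0:
        raise ValueError("covariance matrix is singular")
    dx = point[0] - mean[0]
    dy = point[1] - mean[1]
    # (x - mu)^T * inverse(cov) * (x - mu), with the 2x2 inverse written out
    q = (d * dx * dx - (b + c) * dx * dy + a * dy * dy) / det
    return math.sqrt(q)


def locate_outliers(group, candidates, threshold=3.0):
    """Return the candidates whose distance from the group exceeds the threshold.

    The threshold of 3.0 is an arbitrary illustrative cutoff.
    """
    mean, cov = mean_and_covariance(group)
    return [c for c in candidates if mahalanobis(c, mean, cov) > threshold]


if __name__ == "__main__":
    group = [(0, 0), (2, 0), (0, 2), (2, 2), (1, 1)]
    print(locate_outliers(group, [(1, 1), (4, 5)]))
```

What the sketch makes visible is that "outlier" has no meaning apart from the group chosen as the baseline: change the reference population and the same entry moves from member to deviation.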

Mahalanobis’s enduring interest in racial classification and the use of statistics to develop biometrics—to track what he called the “caste distances” between multiple social groups—aimed to trace the degree of likeness, or what is today called the comparison score between two social groups. Much of Mahalanobis’s work in the 1930s was dedicated to affirming the accuracy of the data collated by Herbert Risley, the inventor of the notorious “nasal index.” The authors cite Projit Bihari Mukharji’s research on the profiloscope, a tool developed by Mahalanobis to categorize persons based on portraits and visual data, and, as Mukharji writes, “sought to capture the racialized type-identity without losing the individualized identity.” Mahalanobis was thus interested in creating genres of population, and in transcribing the biometric fixity of individual bodies to datasets: his methodology also tracks a shift from the measurement of skulls to the use of photographs to affirm the social location of living subjects through biometric measurement.

The resilient hands of countless workers that refuse to be read by machines point us to the gyre of history, where the limits of anthropometry, empire, and caste coalesced into a new regime of control: the fingerprint. The history of the fingerprint as an identification tool arches to Jungipoor, Bengal, in 1858, when William Herschel, a revenue official of the East India Company, coerced a local, Rajyadhar Konai, into providing a handprint on a document. The handprint, initially taken for purposes of intimidation, became part of a sustained scheme by Herschel, who was also an avid astronomer and statistician. He collected countless handprints over time, realizing their consistency and uniqueness of pattern. It was these extractions of handprints from colonial subjects that formed the basis of eugenicist Francis Galton’s development of criminal identification, which was widely adopted. Nicholas B. Dirks describes the empire as “an important laboratory for the metropole” in matters of classification and control. The discovery of fingerprinting as a method to identify colonized subjects produced a discourse of efficiency and ease not dissimilar to what Aadhaar promised—including, revealingly, the inefficacy of Konai’s fingerprints. After receiving a copy of Konai’s fingerprints from Herschel, Galton projected them during a presentation to the Royal Society. His rival, Henry Faulds, pointed to the blurring at Konai’s fingertips in comparison to the fine trace of the palm to discredit the first-ever successful attempt at developing a system of fingerprinting.

Dirks describes the emergence of fingerprinting within the paradigm of anthropometric discourses that sought, in the words of influential civil servant Henry Cotton, “not to destroy caste, but to modify it, to preserve its distinctive conceptions, and to gradually place them upon a social instead of a supernatural basis.” He continues: “Fingerprinting was considered error-free, cheap, quick, and simple, and the results were more easily classified. By 1898, [inspector general of Bengal Edward Richard Henry] wrote that ‘It may now be claimed that the great value of finger impressions as a means of fixing identity has been fully established.’”

The extant handprint of Konai, which is now part of the Francis Galton Collection in London, was developed into a video palimpsest with digital animation by Raqs Media Collective as part of the installation The Untold Intimacy of Digits (2011). Purposefully disintegrating the handprint not through numeric code but fragmented text that ties into contemporary concerns with identity and colonial power, the video alerts us to the long shadow of caste and control that continues to shroud the present. Even as “nasal indices” gave way to fingerprints and new “disciplines, like population genetics, replaced older ones like physical anthropology, and an essence-based idea of ‘race’ was replaced by a frequency-based notion of ‘population,’” as Mukharji writes, “through all of this change a fundamental idea of inheritable, group-based, somatic difference was retained.”

Taylor, Gulson, and McDuie-Ra caution that such ideas of racial difference have not withered away, even as these technologies have transformed or traveled to varied contexts and applications. The distance function continues to operate as a “technical standard … it propels a trajectory of racialized techniques of machine learning applications, while hiding its discriminatory potentials.” The use of the Mahalanobis function to measure likeness and group divergence in facial datasets, for example, can be found in applications as wide-ranging as identifying “unproductive or fatigued worker … or identifying ethnic minority faces in a crowd.” The authors note that the burgeoning of facial-recognition technology in India is worrisome for its easy transferability to territories already under exceptional laws such as the Armed Forces (Special Powers) Act, which allows profiling as adequate grounds for capture and detention by the deployed military. That such processes of control will be further automated and accelerated with the application of facial-recognition technologies is evidenced in the profiling and apprehension of protestors and dissenters in India that has been reported, and in its deployment to screen and track persons attending political rallies.

The material base and epistemological foundations of machine-learning and recognition technologies today rest on nineteenth-century racial anthropometrics, developed in contexts of colonial and upper-caste control. In India, it circles back to the use by dominant groups that control ideological and repressive state apparatuses against religious minorities and non-upper-caste persons. Mukharji reveals how upper-caste, late-colonial subjects, who would go on to shape institutions of learning and research in newly independent India—especially sciences of enumeration and prediction such as statistics—revived an interest in early colonial anthropometry. This revival was driven by a “biometric nationalism” that held caste hierarchy as a necessary basis for identity and social outcomes. From the census emerges the “universal database,” the apparent and spectral forms of surveillance, mandated, embodied, and repeated—a historical process summarized by Alexander Galloway in his 2004 book Protocol as “biopower,” which is “a species-level knowledge” in the same way that “protocol is a type of species-knowledge for coded life forms.”

Analyzing the coded materiality of the passport as a document, which now bears both a printed image of the person’s face and a chip encoded with a high-resolution facial image, Liv Hausken cites Patrick Maynard in arguing that the biometric photograph does not simply depict a person but is also “registering, inscribing, recording” to arrive at a system of detection. Aadhaar, which uses an iris scan and thumbprint along with a printed image, is different from the passport, which uses photographic biometry to develop a process of machine recognition and hence has a more defined set of rules for the composition of the photographic image. The elaborate rules of the passport photo—pertaining to cropping, background color, and facial expression—are developed for a metric standardization that can be read by machines. This picture printed on the surface document also now exists as a high-resolution JPEG stored in a chip that is affixed to the document. To be made machine-readable through this photographically generated biometric data is a phenomenologically different process from the order of barcodes to which the iris and thumbprint belong.

Even as photography percolated into administrative use in the early twentieth century, a detailed bodily description—a set of information describing the body through a classification system that drew from modes of colonial knowing—continued to be included in documents asserting identity across the world. Despite the inclusion of photo portraits in twentieth-century passport documents, they were not accepted as sufficient or “reliable enough,” and a list of features to capture the specifications of the document bearer, including age, height, and the descriptions of nose as “large, straight, roman” continued to be included. These technologies of visibilizing the body through the description of its markers have roots in both the materialization of slavery in the Americas and colonial control entangled with caste hierarchy in South Asia. Mahmoud Keshavarz notes that the role of fingerprinting, which was used in passports alongside photographs in 1916, was “a product of economic exploitation, free labour and enslavement.” He traces the resistance to fingerprinting in the issuance of passports in France in the early twentieth century, writing that it was viewed as a form of “dehumanisation,” linked to “documenting and indexing criminals as well as the colonised populations who in the eyes of French publics were not considered human.” Photography, on the other hand, held a representational axis that situated it closer to art and bourgeois society than criminal sciences—a distinction that Patrick Maynard terms as the one between depiction and detection, in which representation is a mode of control that can lead to complete erasure.

Here, I take a detour to think about the face, or, rather, faciality, which is quite different from the constitution of the fingerprint. In A Thousand Plateaus: Capitalism and Schizophrenia, Gilles Deleuze and Félix Guattari introduce the notion of faciality: in that the face is apart from the body and the head, “faces are not basically individual; they define zones of frequency or probability.” After positing the face as a landscape (and even compounding the two words), Deleuze and Guattari arrive at a crucial understanding: “Certain social formations need face … and the relation of the face to the assemblages of power that require that social production. The face is a politics.” This is a turn away from face understood either as a site for visibilization or as a moment of ethical encounter. To describe this concept, Deleuze and Guattari imagine the face as holding two aspects—a central computer that comprises the elements of the face and the abstract machine of faciality, which adjudicates where the surface (imagined as holding holes and walls) passes for a face. Tellingly, for the authors, the operation of the machine of faciality constructs racism, not by measuring degrees of deviance from the “White-Face,” but by erasing difference: “Racism never detects the particles of the other; it propagates waves of sameness until those who resist identifications have been wiped out.” I am keen to think about this mode of erasure, not so much as removal of marks but as something like frottage—bearing traces of preceding personhood but steadily obliterating autonomy until the body is eclipsed by the face. 
Deleuze and Guattari describe this movement towards faciality in the language of invasion: “The face is produced only when the head ceases to be a part of the body, when it ceases to be coded by the body, when it ceases to have a multidimensional, polyvocal corporeal code—when the body, head included, has been decoded and has to be overcoded by something we shall call the Face.” The movement described here isn’t one of disembodiment but of facialized embodiment—the development of a surface that can wrap the entirety of the body or being until it can be sorted in the binary of legibility and discordance.

Jennifer González notes that on platforms or networked spaces, the face does not exist as a singular entity but is always placed in a chain connected to other faces—Facebook, for example, functions on connecting and expanding this chain of faces. Amsterdam design and research studio Metahaven’s projection of an imminent, if not already present, mode of capital control over society through data is called Facestate, an amalgam of Facebook and state. The Facestate is a libertarian utopia; nothing exists in the plane of the public.

Considering Maria Fernandez’s arguments in her essay “Cyberfeminism, Racism and Embodiment” (2002) that race, while constructed through ideology, is enacted in embodied modes such as interactions and gestures, what possibilities does a racialized body hold when positioned as a data file or face-state? In the essay “A Thing Like You and Me,” Steyerl poses what appears initially as a rhetorical question: “Ask anybody whether they’d actually like to be a JPEG file.” The practice of becoming a fixed image on the document has to do with the materiality of it, with “image as thing, not as representation. And then it perhaps ceases to be identification, and instead becomes participation.” I think about this in conjunction with how Singh and Jackson describe the conceptual category of “resolution” as “an analytic resource to map the making and management of difference enacted by data infrastructures in everyday lives of data subjects. … The politics of resolution is deeply intertwined with the prescribed purpose of the infrastructure that produces it.” If the face wraps the body to the point of its concealment, what comprises the body of this face data?

In The Interface Effect (2012), Alexander Galloway calls these participatory transfers “non-optical interfaces,” which are often overlooked in the discussion on network infrastructures. The webcam and iris scanner in the Aadhaar project created an optic regime based on what Matthew G. Kirschenbaum describes as the journey of digital images through “inscription, object, and code as they propagate on, across, and through specific storage devices, operating systems, software environments, and network protocols.” The digital image accumulates a series of marks and traces. The assembly of these impressions on the image file recasts it not as an ephemeral, weightless specter but as a material entity, which can determine material consequences. To the forensic eye, the life cycle of an image is writ unto its constituting file—which can falter for “low-resolution” citizens, such as those suffering from cataract or corneal scars due to nutritional deficiencies. Lev Manovich describes this attribute as the “computer layer,” which is separate from but enmeshed with the “culture layer”: “new media which is created on computers, distributed via computers, stored and archived on computers, the logic of computer can be expected to significantly influence the traditional cultural logic of media … the computer’s ontology, epistemology and pragmatics—influence the cultural layer.” The inability of fingerprint or iris-scanning machines to read certain bodies further removes these bodies from the ambit of social welfare.

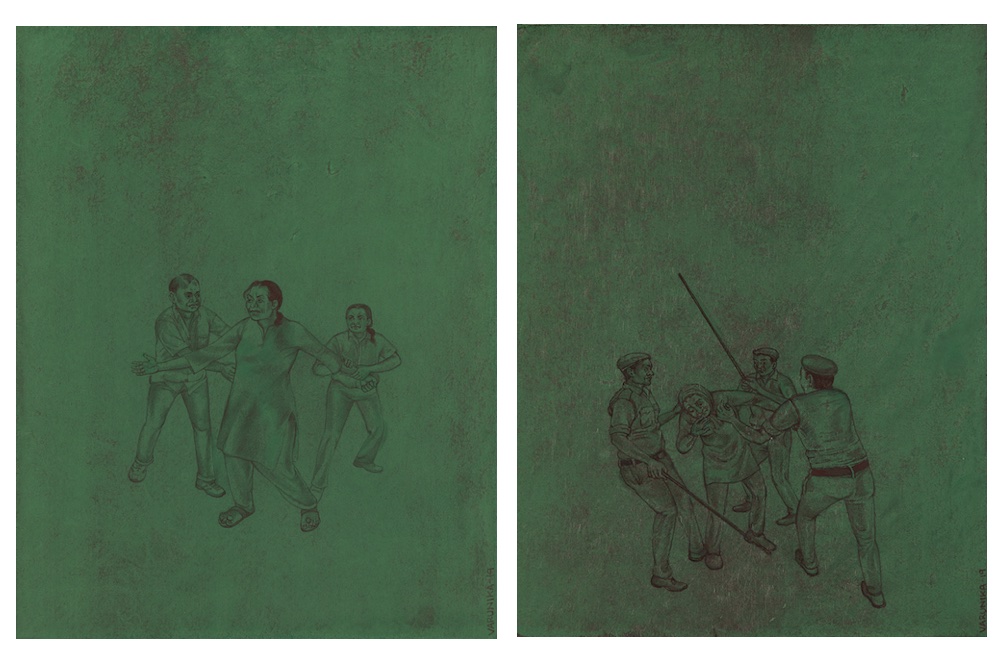

Can paying attention to the hardware, or the material histories of nonoptical objects of extraction and surveillance, reveal modes of life resistance encoded in them? In 2017, Varunika Saraf introduced a new material as the base of her paintings: a synthetic iron-oxide pigment named caput mortuum, which she used in the series Caput Mortuum (2019) and Miasma (2020) to depict institutional violence in India by rendering figures in various bodily positions of duress. Saraf previously worked on depictions of police brutality and Hindu fundamentalist violence in India in a 2020 series titled Miasma, which explored the dispersed atmosphere of policing and harm that now prevails in the country. Selected by Saraf for its resemblance to dried blood, the pigment caput mortuum’s alchemical genus is the residual matter that scrapes the bottom of cauldrons and flasks; specifically, it is the result of unworthy experiments—what remains after all noble elements have sublimated. Translated from Latin, caput mortuum denotes the “dead head,” the residue that symbolizes absolute decay. When applied as the base layer to the paintings, it serves as a grim reminder of the precarious background on which dissident bodies are oriented.

In the visual field of Saraf’s paintings, bodies are pertinacious and clearly defined, with a backdrop of colors serving as context—this could be anywhere in the country because state violence can manifest with agility and impunity, and because the number of political prisoners and attacks on peaceful protestors rises each year. The air is different, and so is the infrastructural support for state-led and state-sanctioned violence.

While Saraf doesn’t identify the persons depicted in her paintings—and it is important to think of this as deliberate—it is interesting to note that the pigment that gives them substance and raises them to sight is associated with the pigment known as nigredo, the alchemical term for putrefaction and “blackness.” Both caput mortuum and nigredo are the result of transmogrification of substance—as two points in the act of material metamorphosis—with nigredo understood as a necessary cleansing of base elements, and caput mortuum as the ruins of a promise. They signal a process that alters both vision and matter, and they appear as the offspring of negation or transformation—as detritus and dissolution. To stay with these movements toward entropy, putrefaction, and refuse, I want to bring up the concept of dirty data as refusal and glitch.

Do identity databases dream of seamless integration of values? Is the removal of erroneous or insufficient data by perfect algorithms a peep show mixing the desire for purity with the ambition for clarity? In “A Sea of Data: Pattern Recognition and Corporate Animism,” an essay by Hito Steyerl, the reality of dirty data—incomplete forms, lies, and unusual and personal abbreviations—gains a new contour. While dirty data is merely a small roadblock to the smoothness of algorithmic progression, in disturbing the idea of pristine datasets and perfect, continuous data extraction from the world, it can also be, in Steyerl’s words, a site to “record the passive resistance against permanent extraction of unacknowledged labor.” Dirty data is data in the real world. It tells us of fatigued minds, saturated bodies, failing point-of-sale machines, the lack of functioning plug points in school classrooms, and the lethargy behind updating grainy portraits on ID cards. It can also be fictive, presumed, or imagined data, suggested by the flourishing market of dummy cams.

Artist and publisher Nihaal Faizal has been sourcing and ordering dummy surveillance cameras, machines that mimic the form and appearance of a CCTV, often even emitting a beady red light, but that actually capture no data. These nonfunctioning dummy cams demonstrate perhaps most clearly the distributed nature of disciplining in protocol societies. We don’t know whether the maker of the first dummy webcam was a student of Foucault, but they understood quite remarkably that the belief of being surveilled is capable of producing the same behavioral set of responses as actual surveillance would. Faizal has been assembling an installation made of these dummy surveillance cams purchased from markets or ordered from e-commerce websites such as Amazon and Flipkart. The cameras are placed on the floor and mounted on top of their packaging, which proclaims in confident bold lettering words like “security” and “imitation,” even as the empty, scarlet, yet lifeless eyes of these machines continue gazing at nothing. To counter the revolutionary potential of dirty data, there is the oppressive ubiquity of spectral data—that which doesn’t exist but continues to govern our lives.

Steyerl warns us not to place too much hope or emphasis on dirty data as a mode of creative resistance. But in acknowledging it, one understands the spectrum of actions that could comprise what Alexander Galloway calls “life resistance” or ways “of engaging with distributed forms of protocological management.” The journeys of two sets of technologies—webcam portraits and iris or finger scanners—may overlap but are distinct, too, with portraits laying the groundwork for punitive facial-recognition technologies to enter the landscape of absolutist governance. Concomitant to the expansion of Aadhaar’s coverage, the technological framework for which was developed by and is executed by private agencies, the government of India has been updating its mass-surveillance assemblage. In addition to being listed as a client of Pegasus spyware, it has acquired new algorithmic software for facial recognition of protestors and tracking of dissenters (the software Trinetra was used during the popular protests against the Citizenship Amendment Act, and more recently during the farmers’ protest; Ambis and FaceTagr are a few others).

The shadow of capital has so far fluttered lightly over this essay, implied but not discussed in the imperial projects of extraction and plantation, or the caste system of subjugation and appropriation of labor through classification of work along caste lines. It is also implied in the accumulation of means of production by upper castes that not simply persists but drives the neoliberal design of India’s economy in the twenty-first century. Mostly, it is evident in the private benefits of the Aadhaar project, which continues to be plugged into every aspect of private and public services. The surveillance economy in India, which is shaped and controlled by global private firms, is missing from many discussions on the development, regulation, and use of technologies of capture.

From the integration of selfies into health and mobility protocols—such as uploading selfies on a government COVID-19 tracker app or for identity checks at the airport—to the use of facial recognition in the arrests made in the 2020 riots of northeast Delhi, the ubiquity of facial-recognition technology is insurmountable. The disproportionate deployment of facial recognition on religious minorities, political dissenters, and Dalit persons continues to be affirmed by news reports on the use of facial-recognition software by state police. The Mahalanobis episteme of race as technology shapes how facial recognition is performed by algorithms to match stored images (templated) with query images culled from varied contexts: CCTV footage, live videos, traffic cams, and drone feeds relaying the faces of ethnic minorities in a crowd or zeroing in on slow bodies.

This expanding edifice works with the existing analogue modes of debilitation and control, especially in landscapes of bare life, such as Kashmir. Facial-recognition software works with and beside concertina wires, terrorist acts, legislation giving unchecked power to the armed forces, protocols of stop and search, watchtowers, neighborhood civic surveillance through performances of patriotism, legal instruments such as the Bhima Koregaon–Elgar Parishad case, and so on. In each of these infrastructures of control and debilitation, we can access parts and not the whole of its governing principles. In an enlightening example of how these race-as-technologies collude and bolster each other, albeit opaquely to the sousveillant, alert eye, the facial-recognition software used by Delhi Police has eighty percent efficacy. After being criticized for making arrests in the northeast Delhi riots of 2020 with software set to a low accuracy threshold, Delhi Police attempted, in handling the Jahangirpuri riots of 2022, to boost this accuracy by rounding up the accused and having them recreate the poses they held in the CCTV footage stills.

In The Interface Effect, Galloway provides an expanded definition of interfaces as “zones of activity,” which produce “interface effects.” Thinking of surveillance as an interface that produces volumes of data and, in effect, engenders informational bodies, what are the forms these interfaces are assuming? The liquid state is one analogy—the vast ocean of infinite datasets, the reflective translucency of screens, the “smooth space” of bodies moving voluntarily through turnstiles swiping their travel cards, the flow of information in the internet of things. Joanna Zylinska argues in Nonhuman Photography (2017) that the liquid state allows for the movement between analogue and digital, making photographs and other cultural objects less fixed and more fluid, rescued from the petrification of binaries.

Can the body wading into the waters of data or, to stretch the metaphor, submerged in becoming data yield a counter-position? A possibility of creativity in the life of resistance is the idea of “apophenia,” or the search for a pattern or relation among unrelated or unconnected data, which is what network algorithms aspire toward. For Steyerl, turning apophenia on its head, or “defiant apophenia,” means playing with expected patterns of recognition. The stakes are high: it can “cause misreadings” or “land you in jail.” But so are the rewards: it can offer glimpses of what apophenia could do, when the over-determinism of algorithmic logic is challenged. Steyerl gives the example of George Michael’s 1998 music video for “Outside,” which interprets a homophobic act against Michael by the Los Angeles Police Department into a declarative anthem for sexual revolution, directly sampling news reports of his arrest. Michael, in ways creative and agentic, reclaims both the legacy of the incident and his interpellation as gay.

In throwing patterns asunder and rewriting them, I am reminded of Rahee Punyashloka’s Dhobi Ink (2021). On pale peach-hued paper, four deep-blue dots are arranged in a grid, diffusing as their radius expands into a soft blur. Punyashloka, an artist who belongs to the Dhobi community, used the pigment known in the Indian subcontinent as dhobi ink—a vibrant shade close to indigo and made from the fruit of Semecarpus anacardium (referred to, unsurprisingly, as dhobi nut), which holds a black resin. The pattern in which the dots appear, two grids of two dots each, is one followed by generations of laborers from the Dhobi community, who perform acts of washing and cleaning of linens and cloths in the Hindu caste hierarchy. Punyashloka, whose artistic, scholarly, and literary practice is anchored in studying, disseminating, and iconizing anti-caste and Dalit activism and writing from India, reclaims the perception of the four dots and the ink used to make them by presenting a new possibility for reading the pattern. Punyashloka turns the liquid state of data into the materiality of liquid itself—an ink tainted by the structure of caste and a system of codification that resides not in algorithmic pathways of networks but in the hands of a community withstanding the brutality of caste and devising methods of persisting. What does a portrait of a community look like when not made with data points, or facial markers, or semantic or epidermal characters? It looks like defiant apophenia.

Another instance of defiant apophenia can be found in the work of Akshay Sethi, who plays with the mutability of a lathi stick, which is visible primarily as a tool for police or mob violence in journalistic photographs and artworks such as Saraf’s. In The Situational Irony of a Stick (2020), Sethi considers the history of how the lathi stick took form—tracing it to its colonial roots in the Indian Police Act of 1861, implemented during the British occupation of the subcontinent. It is distinct from the whipcord used by upper-caste landowners to subjugate the laborers working on their fields, evoked by Jyotirao Phule as a symbol of caste violence in Shetkaryaca Asud (Cultivator’s Whipcord), published in 1881. The lathi, notes Sethi, served to disperse bodies gathered to protest—a necessity for the continuation of colonial rule. Through drawings that mimic the format of a postage stamp—another technology introduced by the British—Sethi depicts the long life of the lathi, as it continues to debilitate protesting bodies in India today.

An extension of this enquiry is Sethi’s Sound of a Weapon (2020), a graphic narrative rendered with charcoal on paper and presented in an accordion format that intertwines two parallel templates of the stick. Struck by a viral news item that detailed how Chandrakant Hugti, the head constable at a police station in Karnataka, India, had transformed his lathi into a flute by strategically puncturing holes into its shaft, Sethi began assembling this playful revision alongside the manual for the construction of a lathi issued to the police. The illustrations and text alternately present two different processes for the use of the lathi—the prescribed and the particular. In the space between them, the possibility for reshaping the tools of power to other ends emerges. Sethi is interested in how the form of the lathi transforms in its location within varied social practices, and his research has also explored the traditional martial art of Silambam, which includes the use of a bamboo staff as the primary tool for offense. Curiously, the British banned the practice of Silambam during colonial rule, as it had been used as a tactic in revolts led by dissident kingdoms of southern India. In 2019, however, when Hugti decided to turn his lathi into a flute, he was rewarded by the state police, his ingenuity not viewed as holding any speck of threat to the prevailing system of police violence. I do grapple with the question of whether turning a lathi into a flute is an act of freedom, of liberating the matter comprising cane, which has been crafted for the purposes of violence, from its destiny as a tool for control toward something else: music, beauty, leisure. I can only wager that these momentary acts of creativity are necessary methods of survival.

*

My Aadhaar still has that same picture. Every time someone gets a peek at it, at a bank or airport security, they do a double take, looking at me intently and then scrutinizing the picture. It takes a couple of minutes. I relish this lag.

You should get this updated, I am told, and I nod without sincerity.

You can do it online; it’s easy now. They usually hand the card back and wave at me.

I am free to go.

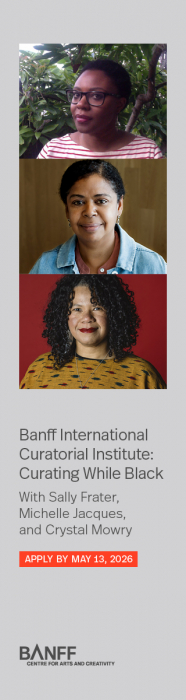

This feature was supported by the Momus / Eyebeam Critical Writing Fellowship. Arushi Vats was the 2021-22 Critical Writing Fellow.
